There is a specific kind of frustration that comes from finding out an AI gave you a confident, well-written answer that was wrong.

Not partially wrong. Specifically, plausibly, wrongly wrong.

It happened to a colleague who used ChatGPT to look up a clinical trial result. The response cited a real researcher, a real institution, and a specific percentage that did not exist in any paper.

It took fifteen minutes to discover the fabrication because everything around it was accurate.

This is the hallucination problem, and in 2026, it has not been solved by any of the three major AI tools. But the rate at which each model produces false information varies significantly.

TL;DR: Claude has the lowest hallucination rate among the three major models in 2026, estimated at approximately 3 percent on factual queries, compared to GPT-5.5 and Gemini 3.1 Pro both near 6 percent. Claude is more likely to say it does not know rather than invent an answer. Gemini has a practical advantage on recent events because of its Google Search grounding. For research, citations, or any task where being wrong matters, Claude is the most reliable starting point.

What the current benchmarks show

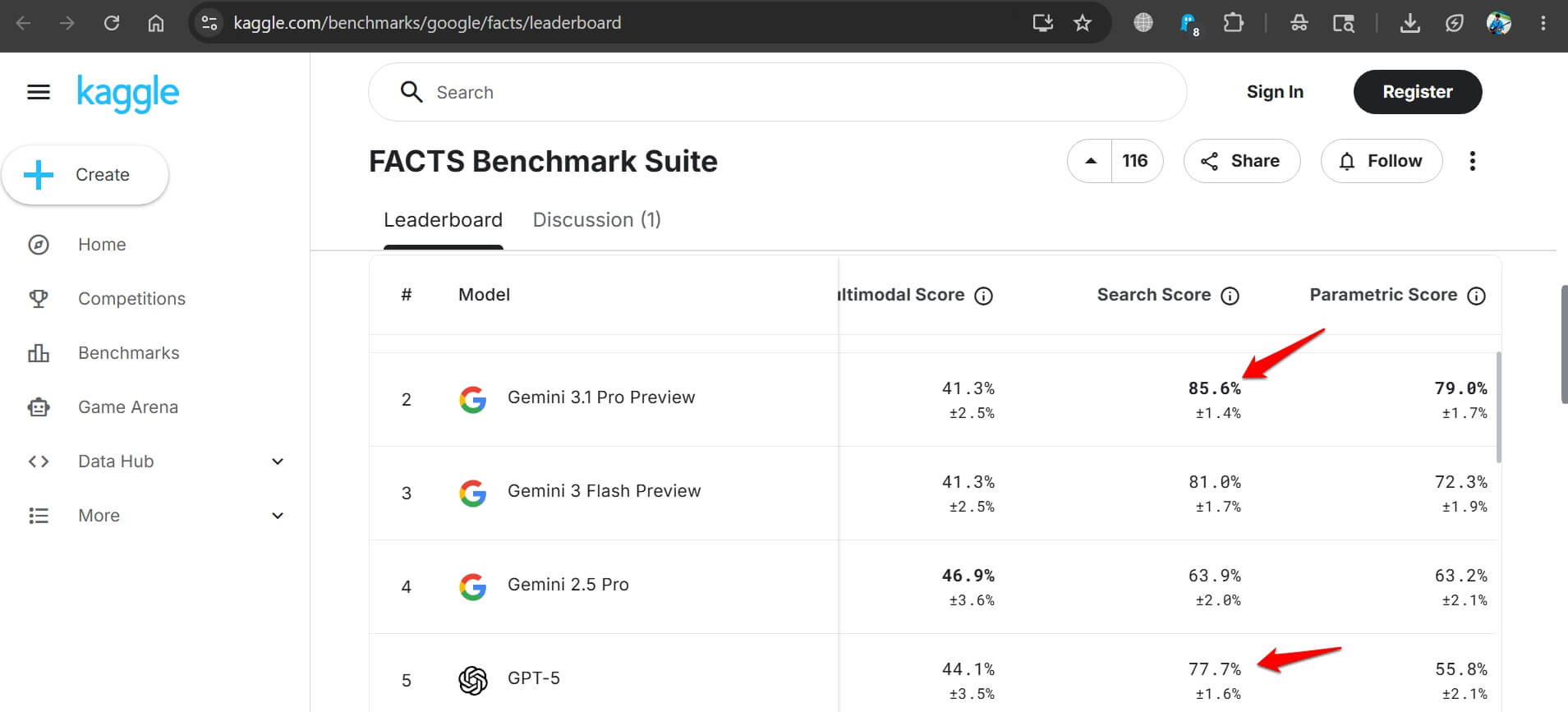

Independent evaluations in 2025 and 2026 have tested hallucination rates across several dimensions, including factual recall, citation accuracy, and summarisation faithfulness.

On pure factual accuracy, Claude consistently scores best.

One widely cited 2026 comparison found Claude’s hallucination rate at approximately 3 percent on factual queries, with GPT-5.5 and Gemini 3.1 Pro both sitting near 6 percent.

Claude is not a perfect tool by any means. A known issue is the “Your conversation is too long” message, which appears when a conversation reaches the limit of the model’s context window.

One likely reason for Claude’s low hallucination rate is how it was trained. Anthropic trained Claude with Constitutional AI, a method in which the model’s outputs are critiqued and revised against a written set of principles during training.

One practical result is that Claude is more likely to flag uncertainty rather than paper over it with a fluent but invented answer.

There is an important caveat. Reasoning mode changes this picture.

The FACTS benchmark from December 2025 found that every reasoning model tested, including GPT-5.5 with thinking enabled, Claude with extended thinking, and Gemini 3.1 Pro reasoning mode, exceeded 10 percent hallucination on grounded summarisation tasks.

Reasoning mode helps with complex logic but makes models more likely to drift from source material. Disable it for extraction and summarisation work.

Where Gemini has a real advantage

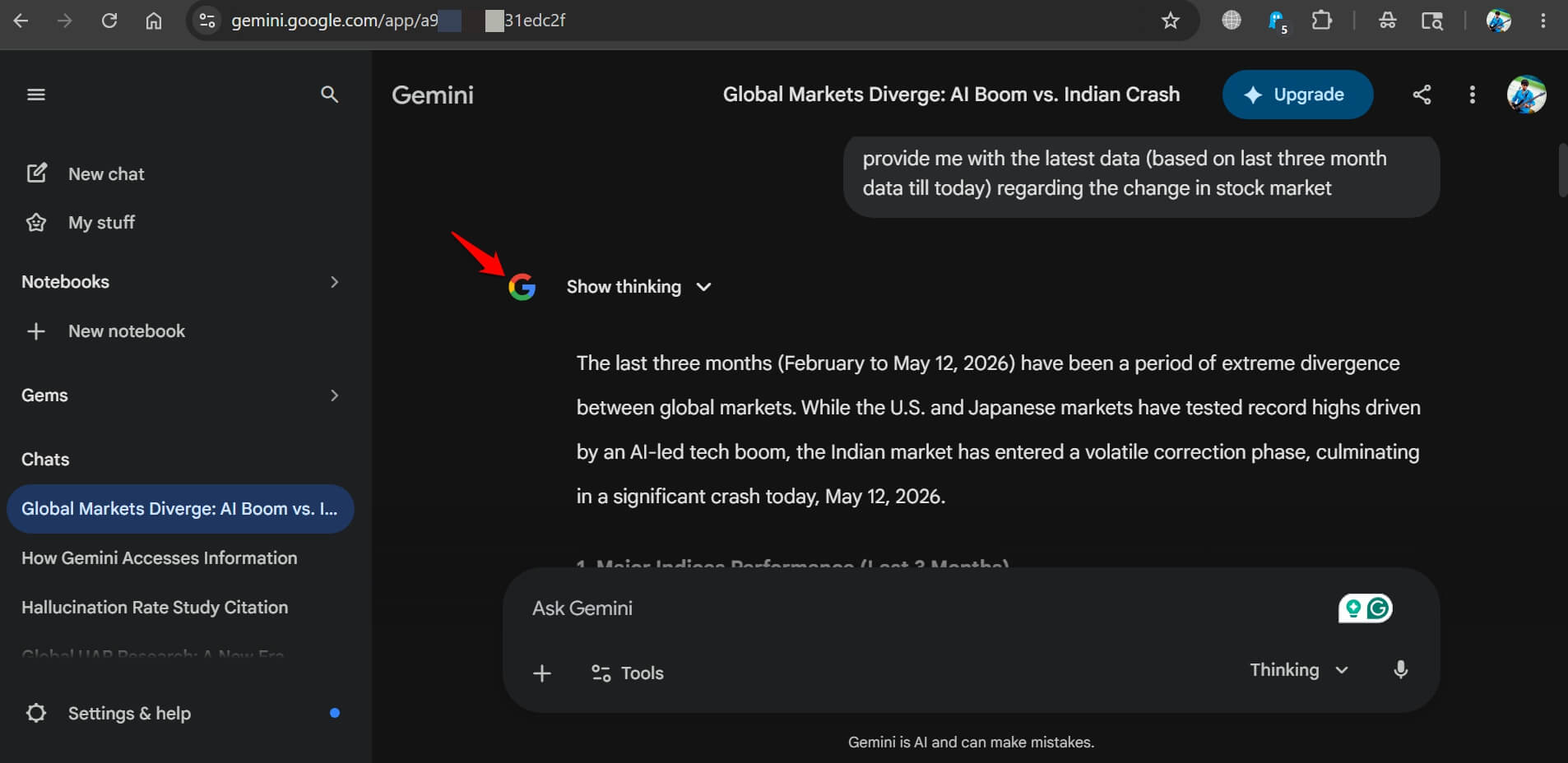

Gemini 3.1 Pro connects to Google Search by default, which changes the accuracy equation for current events and recent information entirely.

When a model can look something up rather than rely on training data, the hallucination rate on recent facts drops sharply.

Gemini can be thought of as drawing on two sources, a “Base” brain and a “Live” brain.

The Base brain is the core intelligence that comes from the dataset the model was trained on. It handles the context, tone, and reasoning in a query.

The Live brain comes into play when a question needs up-to-the-minute information.

When training data will not suffice for the latest information, Gemini uses Google Search to fetch it. This keeps the answer grounded in current sources.

We used Gemini across various Google products to see how useful the AI tool was. It did pretty well.

The FACTS benchmark shows Gemini 3.1 Pro hitting 83.8 percent accuracy on search-enabled factuality tasks, compared to GPT-5.5 at 77.7 percent. Claude’s search-enabled score falls below both.

The practical implication is clear.

For questions about things that happened in the past six months, current prices, recent product releases, or anything that changes frequently, Gemini is the more reliable tool because it checks rather than remembers.

For questions about stable facts, historical information, analysis of documents you provide, or anything where the answer does not change, Claude’s lower base hallucination rate gives it the edge.
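The rule of thumb above can be written down as a small routing helper. This is only an illustrative sketch: the keyword list and the function name `suggest_tool` are assumptions made for the example, not part of any vendor's API, and a substring match like this will misjudge plenty of real questions.

```python
# Hypothetical hint words for "this answer changes often"; tune for your own use.
VOLATILE_HINTS = (
    "latest", "current", "today", "this week", "this month",
    "price", "release", "news", "recent",
)


def suggest_tool(question: str, has_attached_document: bool = False) -> str:
    """Suggest a starting tool using the article's rule of thumb.

    Returns the label "gemini" for questions that look time-sensitive
    and "claude" for stable facts or analysis of a provided document.
    """
    # Analysis of a document you provide does not depend on fresh web data.
    if has_attached_document:
        return "claude"

    lowered = question.lower()
    # Crude substring check: any hint word marks the question as time-sensitive.
    if any(hint in lowered for hint in VOLATILE_HINTS):
        return "gemini"

    return "claude"


if __name__ == "__main__":
    print(suggest_tool("What is the current price of a MacBook Air?"))
    print(suggest_tool("When did the Berlin Wall fall?"))
```

The point of the sketch is the order of the checks: whether you have supplied the source comes first, and freshness only matters when the model has to find the answer itself.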

The citation problem

All three models can produce convincing-looking citations that do not exist.

The rate varies, but none of the AI tools can be trusted to generate academic or journalistic citations without verification.

Claude is less likely to invent a citation than GPT-5.5 or Gemini when asked to support a claim.

But less likely does not mean never. If you are using any AI tool for research that requires verified sourcing, every citation needs to be checked against the original source regardless of which model produced it.
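One way to make that checking routine is to pull every DOI and URL out of an answer into a list you can work through by hand. The sketch below assumes citations appear as DOIs or links. The regular expressions are deliberately simple and the function name `citation_checklist` is made up for this example. It does not verify anything and it will miss plain-text references such as "Smith et al., 2021".

```python
import re

# Simplified patterns: good enough to build a checklist, not a full DOI/URL parser.
DOI_PATTERN = re.compile(r"\b10\.\d{4,9}/[^\s\"<>]+")
URL_PATTERN = re.compile(r"https?://[^\s\"<>)]+")


def citation_checklist(answer: str) -> list[str]:
    """Return the unique DOIs and URLs found in a model answer, in order."""
    found: list[str] = []
    for pattern in (DOI_PATTERN, URL_PATTERN):
        for match in pattern.findall(answer):
            # Drop punctuation that usually belongs to the sentence, not the link.
            item = match.rstrip(".,;)")
            if item not in found:
                found.append(item)
    return found


if __name__ == "__main__":
    sample = "See the trial report (doi: 10.1000/xyz123). Also https://example.org/paper."
    for item in citation_checklist(sample):
        print("[ ] check against original source:", item)
```

Each item on the list still has to be opened and compared with the claim it supposedly supports. A link that resolves is not proof that the paper says what the model claims.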

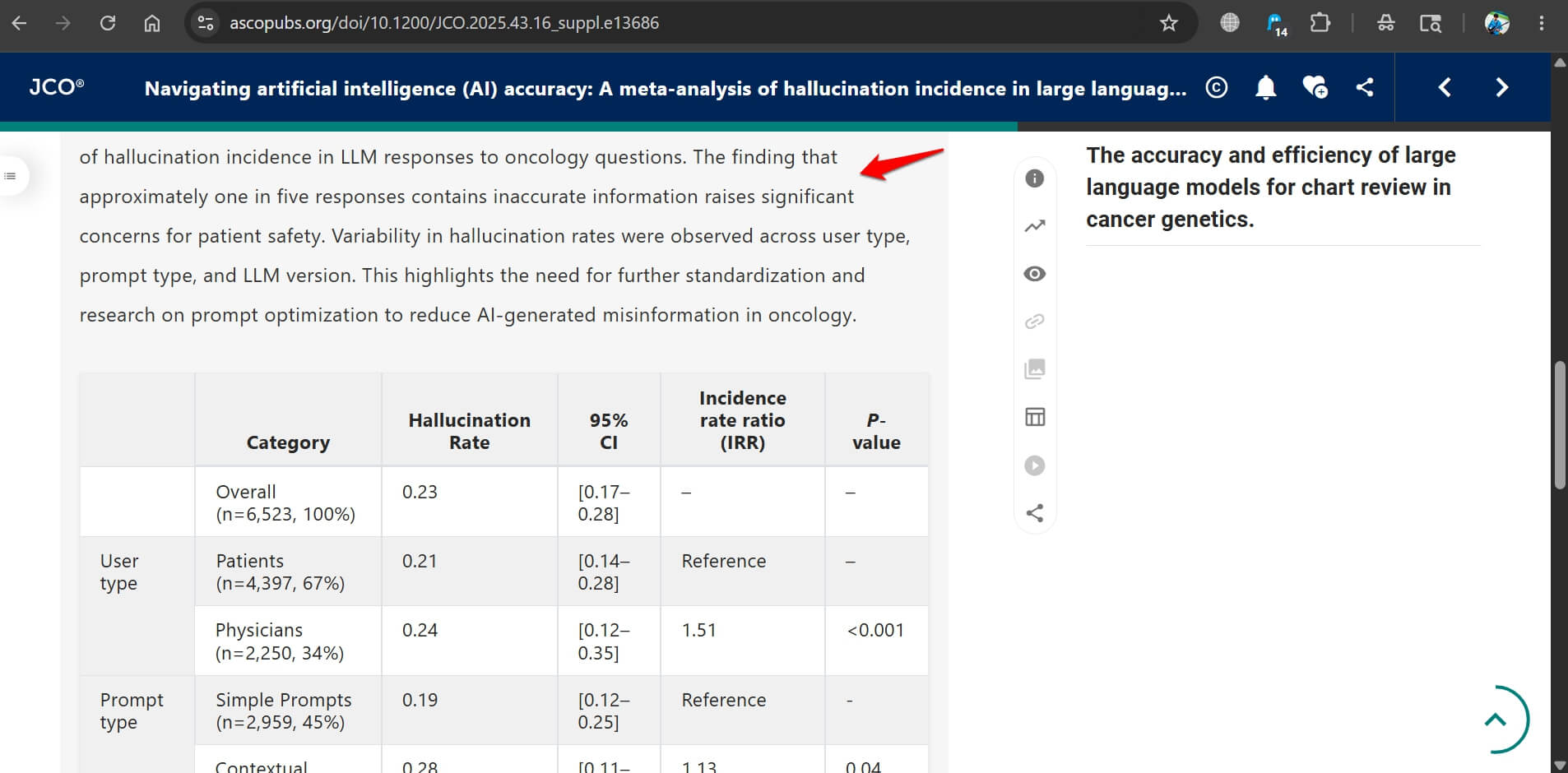

A 2025 study of GPT-4 in a clinical context found hallucinated details in one out of five responses to diagnostic prompts. Consumer tools can be expected to show similar problems when asked about specific, technical, or recently published information.

How to get more accurate answers from any model

A few habits reduce hallucination risk across all three AI tools.

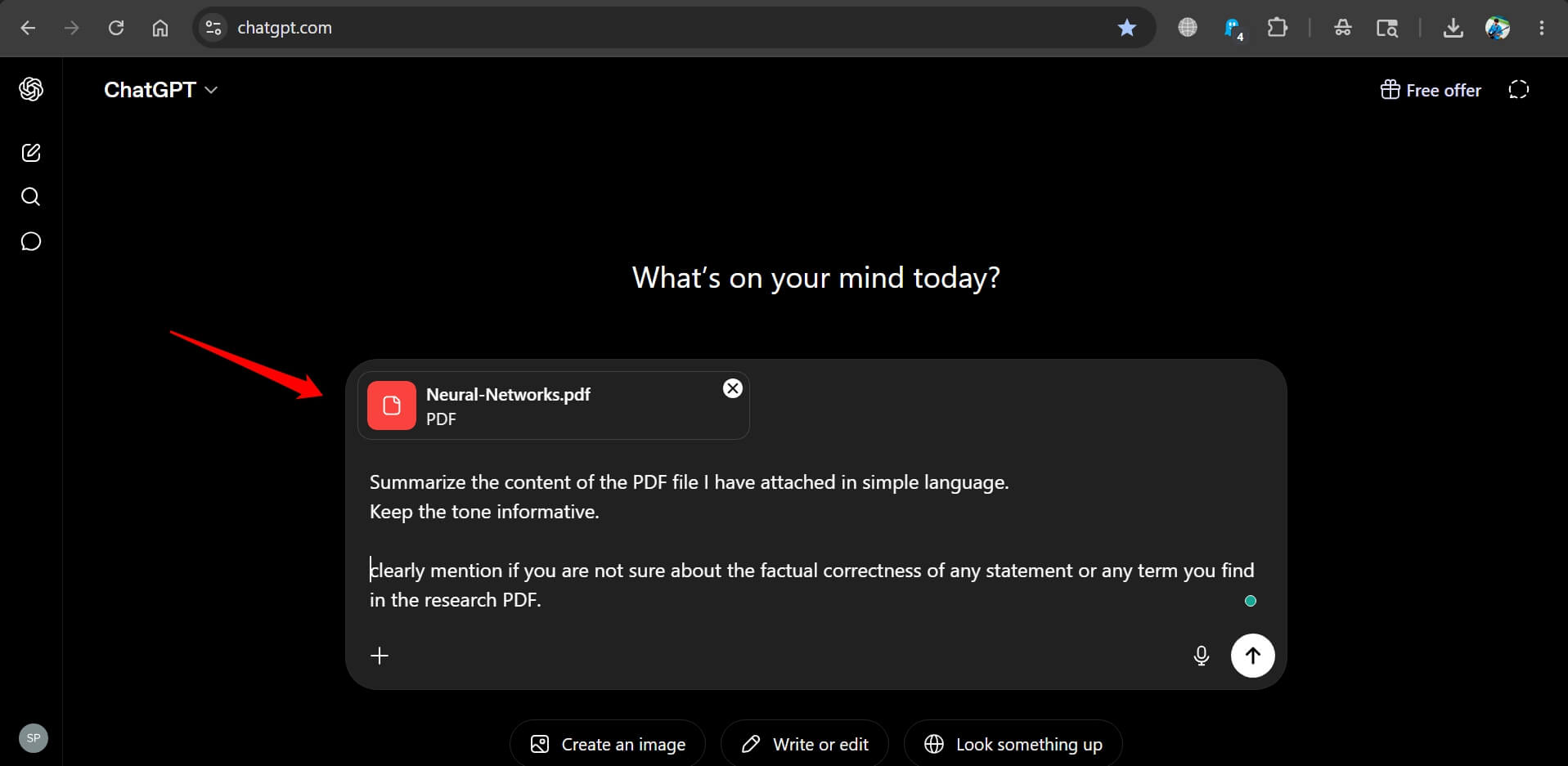

Uploading the source material rather than asking the model to recall it is the single most effective change.

A model summarising a document you have provided will be significantly more accurate than a model recalling the same document from training data.

Asking the model to flag uncertainty is also useful.

Prompting with phrases like “tell me if you are not sure” produces noticeably more hedged and accurate output than open-ended queries that reward confident answers.

Disabling reasoning mode for factual extraction tasks reduces the summarisation drift described above.
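The first two habits, supplying the source and asking for flagged uncertainty, can be combined in one reusable prompt template. The sketch below only builds the prompt text. The function name `build_grounded_prompt` and the exact wording are assumptions for illustration, and the result can be pasted into whichever tool you use.

```python
def build_grounded_prompt(question: str, source_text: str) -> str:
    """Wrap a question with the source material and an explicit uncertainty instruction."""
    return (
        "Answer using only the source material between the markers.\n"
        "If the source does not contain the answer, or you are not sure, "
        "say so instead of guessing.\n\n"
        "--- SOURCE START ---\n"
        f"{source_text.strip()}\n"
        "--- SOURCE END ---\n\n"
        f"Question: {question.strip()}"
    )


if __name__ == "__main__":
    prompt = build_grounded_prompt(
        "What was the response rate?",
        "The trial enrolled 120 patients.",
    )
    print(prompt)
```

Putting the instruction before the source and the question last is a style choice, not a tested optimum. What matters is that the model is told where the answer must come from and that "I am not sure" is an acceptable reply.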

Who should care most about this

For casual use, a 3 percent versus 6 percent hallucination rate is unlikely to matter much. Most everyday tasks produce answers where a wrong detail is obvious or low-stakes.

For anyone using AI in work where errors have real consequences, the difference matters.
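To see why a few percentage points matter at volume, it helps to run the numbers. The sketch below takes the approximate 3 percent and 6 percent rates quoted in this article and assumes, as a simplification, that each query is independent and equally likely to be wrong. Real error rates vary by topic, so treat the output as a rough illustration rather than a forecast.

```python
def expected_errors(rate: float, queries: int) -> float:
    """Expected number of wrong answers across `queries` factual questions."""
    return rate * queries


def chance_of_at_least_one_error(rate: float, queries: int) -> float:
    """Probability that at least one answer is wrong, assuming independent queries."""
    return 1 - (1 - rate) ** queries


if __name__ == "__main__":
    # Rates are the approximate figures cited in the article, not measured here.
    for label, rate in (("3 percent", 0.03), ("6 percent", 0.06)):
        for queries in (20, 100):
            errors = expected_errors(rate, queries)
            chance = chance_of_at_least_one_error(rate, queries)
            print(
                f"{label}, {queries} queries: "
                f"about {errors:.1f} wrong answers expected, "
                f"{chance:.0%} chance of at least one"
            )
```

Under these assumptions, even the lower rate makes an error likely somewhere in a long research session, which is why verification matters whichever model you choose.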

Legal research, medical information, financial analysis, and academic work are all areas where Claude’s conservative approach to uncertain information is a genuine practical advantage over the more fluent but less careful alternatives.
