Siri has felt limited for years. Not broken, exactly, but narrow. It handles timers and reminders well enough, reads back your messages, and plays music on command. Ask it anything that requires actual reasoning and it tends to fall apart.

Apple is now rebuilding that foundation using Google’s Gemini models. A joint statement published by both companies in January 2026 confirmed a multi-year collaboration, and Google publicly reaffirmed the timeline at its Cloud Next event in April.

The upgraded Siri is expected to arrive as part of iOS 27, with Apple’s WWDC on June 8, 2026, set to show the first real details.

That sounds significant, and it is. But there is a real gap between what Gemini can do as a standalone product and what Siri will deliver once Apple has shaped it to fit inside its own ecosystem and privacy model. That gap is where the interesting story sits.

TL;DR: Apple is using Google’s Gemini models to rebuild Siri from scratch, with the upgrade expected in iOS 27 this fall. Everyday interactions like follow-up questions, cross-app tasks, and context-aware requests should improve meaningfully. But Siri will not become a full Gemini replacement. Apple controls what the model can access, how much it can do, and where its limits sit, and those decisions are baked in by design.

Why Siri needed a Gemini-level overhaul

Apple first previewed a smarter Siri at WWDC 2024, showing the assistant reading your Mail, cross-referencing Messages, and understanding on-screen context. It looked convincing. It never shipped.

Apple officially delayed those features in March 2025, acknowledging it needed more time. Reports later described a Siri codebase so tangled that engineers had to start over on a large language model foundation. That rebuild is what the Gemini partnership now supports.

Apple is reportedly paying roughly $1 billion a year for a custom version of Gemini, said to use a 1.2-trillion-parameter architecture.

Apple’s current cloud-based Apple Intelligence models use around 150 billion parameters, which would make the custom Gemini model roughly eight times larger. That scale difference matters for how much Siri will be able to understand and reason through.

The deal uses Apple’s existing infrastructure as a guardrail. Apple has said user data will not be shared with Google, and the models are expected to run on infrastructure Apple controls rather than Google’s general cloud servers, though the final architecture has not been fully confirmed.

What Gemini-powered Siri could actually improve

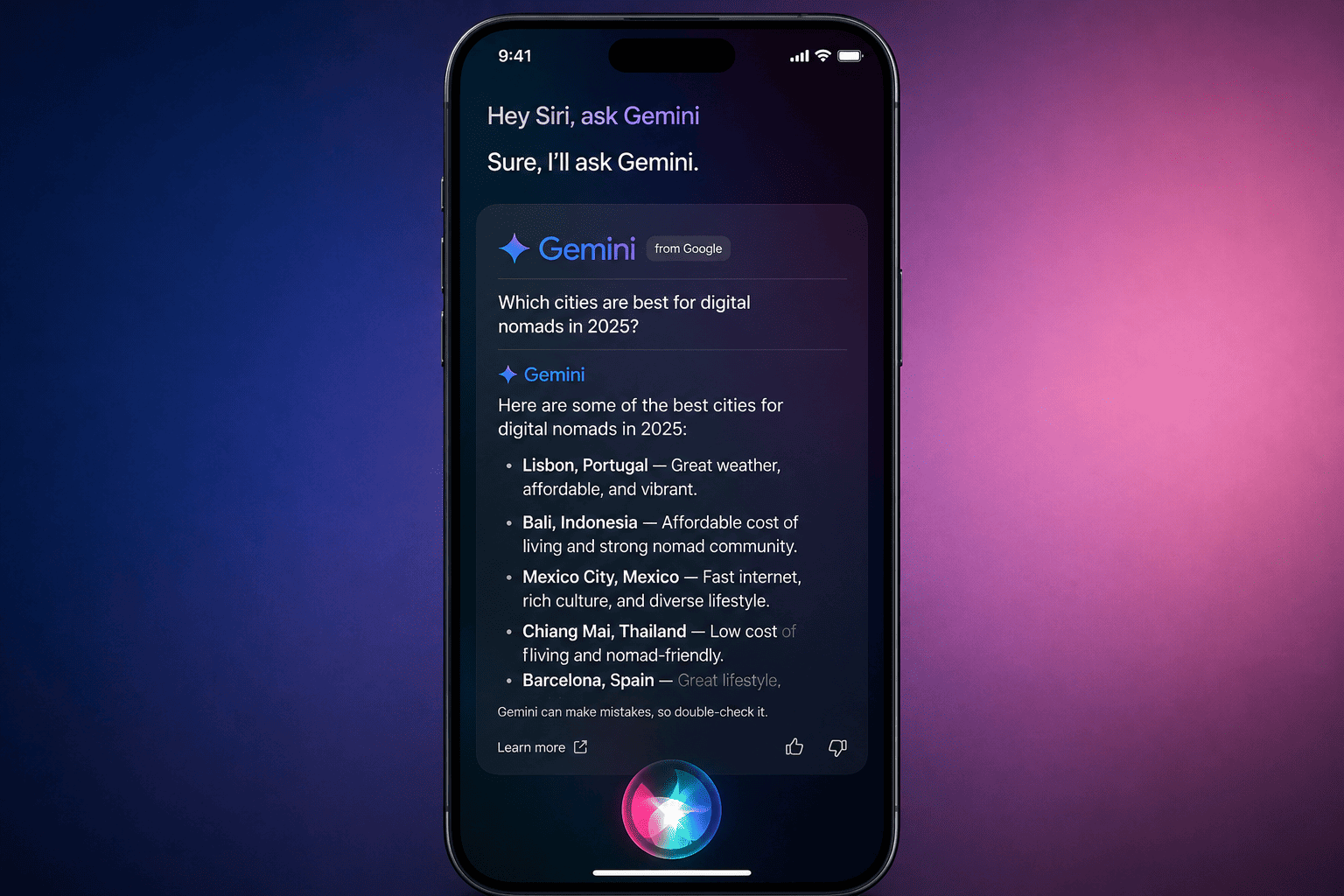

The clearest gains will show up in everyday conversation. Right now, Siri treats each query as isolated. Ask a follow-up question and it looks at you blankly. The Gemini-based Siri is expected to hold context across a back-and-forth conversation, which changes even simple interactions.

Instead of “Set alarm 7 AM” as a rigid command, you could say something like “Wake me up a bit earlier than usual, I have a meeting,” and Siri could actually work with that.

Cross-app awareness is the bigger practical shift. Multiple reports describe Siri being able to handle requests like “Summarize the last email from my manager” without you opening Mail manually. Or “Reply to this message but keep it short and professional,” with Siri drafting something coherent rather than bouncing you to the keyboard.

These are the kinds of interactions where the current Siri consistently disappoints.

Multi-step task handling is also expected. Combining a location-aware reminder with a contact and a time condition, without needing three separate commands, is the kind of thing Gemini’s architecture handles well. Whether Apple’s implementation actually delivers that is something we will not know until the betas land in June.

There is also a standalone Siri app reportedly in development for iOS 27, with persistent conversation history and a chatbot-style interface. If you have ever dealt with Siri’s errors mid-task, the idea of a dedicated interface with saved sessions is a meaningful quality-of-life change.

Where Siri will still feel limited

The honest read here is that Gemini on Android or the web and Gemini inside Siri are going to behave very differently. That is not a criticism of Apple. It simply reflects the product decisions Apple has made.

Ask the full Gemini to plan a three-day trip to a city with a budget and options, and you get a detailed, exploratory answer with links and alternatives. Siri, even with Gemini as its foundation, is expected to produce something shorter, more structured, and less open-ended. Apple is not building a freeform AI research tool; it is building a voice assistant with guardrails.

Real-time web knowledge is another genuine constraint. Gemini on the web pulls fresh search results dynamically. Siri’s version may handle known information well but feel more limited when you ask about something that happened last week.

Apple’s deal reportedly includes an AI-powered web search feature, but how those results compare with a live Gemini search in depth and timeliness will remain unclear until iOS 27 ships.

Google ecosystem integration is also limited by design. Asking Gemini to summarize your Gmail threads works deeply inside Google’s own apps. Siri is not going to replicate that, even if Apple’s own Mail and Messages integration improves. If you rely on Gmail and Google Calendar, the benefit will be narrower than it sounds.

Ask “What’s the best phone under $500 right now?” and Gemini gives a detailed comparison. Siri may give a shorter answer or need follow-up questions.

The tiered architecture is worth understanding. According to Apple’s Q1 2026 earnings call, simple tasks will stay on-device using Apple’s own models, while moderate requests go to Apple’s Private Cloud Compute servers.

Heavy reasoning and world knowledge queries hit the custom Gemini model. Most users will never think about this routing, but it means the smartest version of Siri is also the one that depends on a network connection and Apple’s infrastructure holding up.
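Apple has not published how Siri decides which tier handles a request, so the sketch below is purely illustrative. It models the three tiers described above with a hypothetical 1-to-3 complexity score and an assumed offline fallback. The names `Tier` and `route_request` and the scoring are invented for this example and are not Apple APIs.

```python
from enum import Enum


class Tier(Enum):
    """The three processing tiers described in the article (labels are illustrative)."""
    ON_DEVICE = "Apple on-device model"
    PRIVATE_CLOUD = "Apple Private Cloud Compute"
    GEMINI = "Custom Gemini model"


def route_request(complexity: int, needs_world_knowledge: bool, online: bool) -> Tier:
    """Pick a tier for a request. Hypothetical logic, not Apple's actual router.

    complexity: an invented score (1 = simple, 2 = moderate, 3 = heavy reasoning).
    needs_world_knowledge: whether the query depends on broad general knowledge.
    online: whether a network connection is available.
    """
    if not online:
        # Assumption: with no connection only the local model is reachable,
        # so every request falls back to it, even if the answer is weaker.
        return Tier.ON_DEVICE
    if complexity >= 3 or needs_world_knowledge:
        # Heavy reasoning and world-knowledge queries go to the Gemini-based model.
        return Tier.GEMINI
    if complexity == 2:
        # Moderate requests go to Private Cloud Compute.
        return Tier.PRIVATE_CLOUD
    # Simple tasks such as timers stay on the device.
    return Tier.ON_DEVICE


# Example requests (the scores are made up for illustration).
print(route_request(1, False, True).value)  # set a timer
print(route_request(2, False, True).value)  # summarize an email
print(route_request(3, True, True).value)   # plan a trip
print(route_request(3, True, False).value)  # the same trip request while offline
```

The last call shows the trade-off described above: under this assumed fallback, losing the network quietly drops a heavy request to the least capable tier.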

Early rollouts of Apple Intelligence features have had inconsistency problems. The notification summaries that shipped in iOS 18 sometimes generated inaccurate news headlines.

A Gemini-based Siri will be more capable, but the early versions may still feel uneven until Apple refines the routing and reliability over subsequent updates.

The privacy trade-off Apple is making

Apple is choosing a different set of priorities than Google does with its own Gemini product. The emphasis on on-device processing, Private Cloud Compute, and not sharing user data with Google means the model operates with less personal context than a fully cloud-connected AI assistant would have.

That produces safer, more predictable behavior. It also means Siri is unlikely to learn from your habits in the way that a more permissive system could.

You will probably not get suggestions based on patterns across all your apps unless Apple explicitly builds that feature in and gives you control over it.

For users who have always trusted Apple’s privacy-first approach, this is entirely consistent with what they expect. For users hoping for a deeply personalized AI that knows their routines, the current architecture has real limits.

What to realistically expect

If your main frustration with Siri is the basics (follow-up questions that do not work, poor cross-app handling, rigid command phrasing), the upgrade should be genuinely noticeable. These are the exact problems a Gemini-level LLM is suited to fix, and they are the ones Apple has been failing on for years.

If you are expecting ChatGPT-level depth, autonomous research, or the ability to chain complex multi-app automations end to end, the initial iOS 27 version is probably not going to satisfy that.

Reports suggest Siri’s chatbot could be competitive with Gemini 3, which would be meaningful. Whether that holds up in real use is something no one outside Apple knows yet.

Most of the new features are also expected to require an iPhone 15 Pro or newer, since that is the minimum for running Apple Intelligence at all. That is the same gate that has applied to earlier Apple Intelligence features, and it rules out a large portion of active iPhones worldwide.

WWDC on June 8 is where Apple will show its hand. The betas in June and July will tell us how it actually works. If you are curious about what else is coming for Apple’s assistant, the full iOS 27 AI features breakdown covers what has been reported across the board.

Siri is getting a real upgrade. Apple is just doing it on its own terms, and those terms matter as much as the underlying model does.

Frequently Asked Questions

Which iPhones will support the Gemini-powered Siri?

Based on Apple’s existing Apple Intelligence requirements, the new Siri features are expected to require an iPhone 15 Pro or newer. Older devices, including standard iPhone 15 models, are unlikely to support the full feature set.

Will my data be shared with Google when Siri uses Gemini?

Apple’s joint statement with Google states that Apple Intelligence will continue to run on Apple devices and Private Cloud Compute while maintaining Apple’s privacy standards, and that user data will not be shared with Google.

When will the Gemini-powered Siri actually be available?

Apple has committed to a 2026 release. Based on current reporting, the features are expected to be previewed at WWDC on June 8, 2026, with a wider rollout alongside iOS 27 in the fall.

Is the ChatGPT integration in Siri going away?

Apple has said it is not making changes to the existing ChatGPT integration for now. Both integrations may coexist, though how long Apple maintains two external AI partners is an open question.

Will Siri be able to hold a full back-and-forth conversation?

That is one of the core improvements expected in iOS 27. Bloomberg reporting describes a chatbot-style Siri with persistent conversation history, functioning similarly to standalone AI apps like ChatGPT or Gemini.

Does Gemini in Siri mean I can use Google services from Siri?

No. Apple has clarified that Siri uses Gemini as the underlying model, not as a gateway to Google services. You will not be accessing Gmail or Google Search through Siri; the model just powers Siri’s own reasoning capabilities.

If you have any thoughts on “Siri Is Getting Gemini, but It’s Not the Upgrade You Think,” feel free to drop them in the comment box below. Also, please subscribe to our DigitBin YouTube channel for video tutorials. Cheers!